Buzzwords: “Artificial Intelligence” and “Machine Learning” Are Not Interchangeable

Artificial Intelligence and Machine Learning are two technical terms that are quietly making their way into your day-to-day life. If you are a developer, you may already be using these concepts. But the terms are often used interchangeably, which creates confusion and leads to false assumptions. They are related, but they are not the same thing. At eSparkBiz, we are here to make you aware of the differences between Artificial Intelligence and Machine Learning.

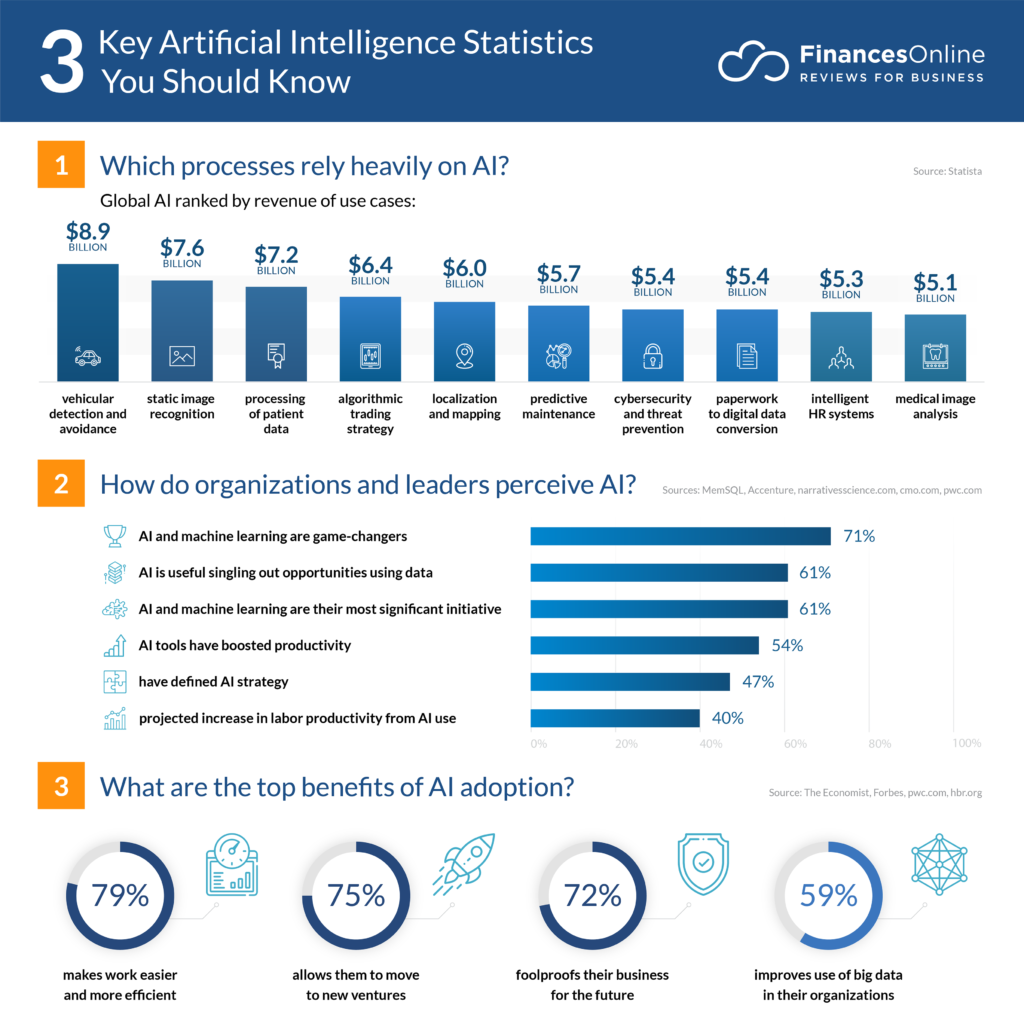

According to the Finances Online report, below are the market statistics for AI and ML:

What is Artificial Intelligence?

Artificial Intelligence is the simulation of human intelligence in machines such as computers or robots so that they can function more efficiently. It helps machines perform complex tasks by learning, gaining experience, and evolving.

In other words, Artificial Intelligence gives you machines that are smart like humans, which eases decision-making and problem-solving.

Examples of Artificial Intelligence

Have you ever wondered how

- your social media feeds are personalized according to your likes and dislikes?

- financial institutions track fraudulent transactions so easily?

- Google Maps gives you live updates about the traffic on your travel route?

- your email account filters spam emails without even letting you know?

- plagiarism-checking tools catch duplicated or copied content?

The answer to the above questions is the effective implementation of Artificial Intelligence to ease your day-to-day activities.

Types of Artificial Intelligence

1. Artificial Narrow Intelligence/ Weak AI

Weak Artificial Intelligence simulates human cognition in machines, but only within a narrow area. It is goal-oriented and automates time-consuming tasks.

This type of artificial intelligence can only simulate human behavior within a specific range of parameters. Machines with weak AI often still require human intervention.

Many narrow AI systems, such as virtual assistants, are programmed to perform tasks that involve interacting with humans in a personalized way. This is done with natural language processing (NLP), which interprets human text and speech.

2. Artificial General Intelligence/ Strong AI/ True Intelligence

Machines with general intelligence would replicate human behavior and intelligence by learning and then applying what they have learned to solve problems. Strong AI builds on the theory-of-mind AI framework, which aims to train machines to understand humans on a deeper level.

However, this type of AI currently exists only in theory. To develop it, researchers would need to give machines human-level intelligence so that they can think, understand, and act in the same way as humans.

3. Artificial Superintelligence (ASI)

Artificial superintelligence is a hypothetical form of AI in which machines not only simulate human behavior but also become self-aware and might become superior to humans in terms of intelligence.

Developers and scientists expect this kind of artificial intelligence to be better than humans at everything humans do. You might be surprised to see machines with higher problem-solving capabilities and decision-making skills than humans in the future. Much of today's AI development is done in Python, which the Best Python Development Companies are leveraging.

This concept sees AI evolve to a level where machines develop emotions, desires, and beliefs of their own. You can relate it to robots taking over the world, as shown in sci-fi movies.

What is Machine Learning?

Machine learning is a subset of artificial intelligence, and a field within computer science, in which machines learn on their own by applying statistics to the data they are given, so that they can perform tasks without human intervention.

It comprises two stages:

- Machine learning structure: take data/inputs and observe and learn from them.

- Machine learning impact: apply what was learned to use cases (for example, facial recognition).

Put simply:

“Machine learning is a concept that makes machines intelligent without the need to teach them how to behave. For this purpose, machines identify various patterns in the given data and learn from them. The machines then apply what they have learned to perform new tasks.”
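
The two stages above can be sketched in a few lines of Python. This is only a minimal illustration, not a production approach: the data (hours studied versus exam score) and the function names `fit_line` and `predict` are made up for the example, and ordinary least squares is just one simple way a machine can "identify a pattern" in data.

```python
def fit_line(xs, ys):
    """Stage 1: learn a slope and intercept from example data (least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(model, x):
    """Stage 2: apply the learned pattern to an input the machine has not seen."""
    slope, intercept = model
    return slope * x + intercept


# Hypothetical training data: hours studied and the resulting exam score.
hours = [1, 2, 3, 4, 5]
scores = [52, 54, 56, 58, 60]

model = fit_line(hours, scores)   # learn from the data
predicted = predict(model, 6)     # use the learning on a new case
print(predicted)                  # 62.0
```

Nobody told the program that each extra hour adds two points; it worked that out from the examples, which is the sense in which the machine "learns" here.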

Examples of Machine Learning

- You might have used Google’s speech recognition, which converts your speech into text and saves you the time you would otherwise spend typing.

- You might also have seen tools and techniques used in the medical diagnosis of a disease, patient monitoring, and prediction of disease progression.

These are all real-world examples of machine learning implementation.

Types of Machine Learning

1. Supervised Learning

When the machine learns from the given data while being supervised or guided by a teacher, it is called supervised learning. The teacher here refers to a given set of data that trains the machine to make predictions and decisions whenever a new set of data is given to it.

The data is fed into the learning algorithm in the form of examples with labels. This means you provide lots of information about each case along with its known outcome; together these are called labeled data. The algorithm then tries to predict the label for each example, and feedback tells it whether each prediction was right or wrong.

With time, the algorithm learns to predict the right label for each example. Supervised learning is often described as task-oriented, because it focuses on performing a single task with high accuracy.
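
As a rough sketch of this predict-and-correct loop, the following Python example trains a perceptron, one of the simplest supervised learners. The six labeled examples, the two numeric features, and the function names are hypothetical; real projects would normally use a library and far more data.

```python
def predict(weights, bias, features):
    """Return label 1 if the weighted sum is positive, otherwise label 0."""
    score = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if score > 0 else 0


def train_perceptron(examples, epochs=20, learning_rate=1.0):
    """Adjust weights whenever a prediction disagrees with the known label."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)  # the feedback
            if error != 0:
                mistakes += 1
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
        if mistakes == 0:  # every training example is now predicted correctly
            break
    return weights, bias


# Hypothetical labeled data: (feature_1, feature_2) paired with a 0/1 label.
labeled_examples = [
    ((1.0, 1.0), 0), ((2.0, 1.0), 0), ((1.0, 2.0), 0),
    ((4.0, 5.0), 1), ((5.0, 4.0), 1), ((5.0, 5.0), 1),
]

weights, bias = train_perceptron(labeled_examples)
print(predict(weights, bias, (1.5, 1.5)))  # 0
print(predict(weights, bias, (4.5, 4.5)))  # 1
```

The `error` value plays the role of the teacher's feedback: the weights change only when a prediction is wrong, so the mistakes shrink over the epochs.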

2. Unsupervised Learning

Unlike supervised learning, here the algorithm is fed unlabeled data and is given tools to understand the characteristics of that data. The machine then learns to organize and group the data and turn it into meaningful information. This grouped or clustered data gives humans a sensible and productive dataset for performing various tasks.

The most exciting feature of this type of machine learning is that it takes large amounts of otherwise unused, unlabeled data, makes sense of it, and boosts productivity.
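
To make the grouping idea concrete, here is a minimal sketch of k-means clustering, one common unsupervised technique, on one-dimensional data. The numbers are invented, and starting from the first `k` points is a simplifying assumption; practical implementations choose starting centroids more carefully.

```python
def k_means_1d(points, k, max_iterations=100):
    """Group unlabeled numbers into k clusters around moving centroids."""
    centroids = list(points[:k])  # naive start: the first k points (assumption)
    labels = [0] * len(points)
    for _ in range(max_iterations):
        # Assign each point to its nearest centroid.
        labels = [min(range(k), key=lambda c: abs(p - centroids[c]))
                  for p in points]
        # Move each centroid to the mean of the points assigned to it.
        new_centroids = []
        for c in range(k):
            members = [p for p, label in zip(points, labels) if label == c]
            if members:
                new_centroids.append(sum(members) / len(members))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_centroids == centroids:  # nothing moved, so the grouping is stable
            break
        centroids = new_centroids
    return labels, centroids


# Hypothetical unlabeled measurements that happen to form two groups.
data = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]
labels, centroids = k_means_1d(data, k=2)
print(labels)  # [0, 0, 0, 1, 1, 1]
```

No labels were supplied at any point; the algorithm separated the small values from the large ones purely from how the data is distributed.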

3. Reinforcement Learning

In this learning method, the machine interacts with its environment through trial and error. Training is done by sending a signal, a reward or a penalty, for right or wrong answers. As a result, the machine gradually starts to produce the best outcomes.

A reinforcement learning algorithm learns from its mistakes just like a human. When placed in a new environment, it will initially make a lot of mistakes. But with time, as it receives signals for good and bad behaviors, it learns to stop making the same mistakes.
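
A small sketch of this trial-and-error loop is the epsilon-greedy strategy on a "multi-armed bandit", a standard toy problem in reinforcement learning. Everything specific here is an assumption made for illustration: the three reward probabilities, the 10% exploration rate, the step count, and the function name `run_bandit`.

```python
import random


def run_bandit(reward_probabilities, steps=5000, epsilon=0.1, seed=0):
    """Learn by trial and error which option (arm) pays off most often."""
    rng = random.Random(seed)
    n_arms = len(reward_probabilities)
    pulls = [0] * n_arms        # how often each arm has been tried
    estimates = [0.0] * n_arms  # running average reward per arm
    for _ in range(steps):
        if rng.random() < epsilon:
            arm = rng.randrange(n_arms)  # explore: try a random arm
        else:
            arm = max(range(n_arms), key=lambda a: estimates[a])  # exploit
        # The environment answers with a reward signal of 1 or 0.
        reward = 1.0 if rng.random() < reward_probabilities[arm] else 0.0
        pulls[arm] += 1
        # Nudge the running average toward the reward just observed.
        estimates[arm] += (reward - estimates[arm]) / pulls[arm]
    return estimates, pulls


# Hypothetical environment: three arms that pay out 20%, 50% and 90% of the time.
estimates, pulls = run_bandit([0.2, 0.5, 0.9])
best_arm = max(range(len(estimates)), key=lambda a: estimates[a])
print(best_arm, pulls)
```

The agent is never told the payout probabilities. It makes poor choices early on, but the reward signals should steer it toward the arm that pays out most often, which here is the last one.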

Difference between Artificial Intelligence and Machine Learning

| S.No. | Artificial Intelligence | Machine Learning |
| --- | --- | --- |
| 1 | Aims to make machines work like humans. | Teaches machines to learn from data rather than follow fixed programs. |
| 2 | Focuses on simulating human intelligence. | Focuses on finding patterns in data, often in terms of probability. |
| 3 | Uses analytical models to make intelligent decisions. | Automates the building of analytical models. |
| 4 | Can rely on decision and logic trees. | Relies on statistical models. |
| 5 | Applications: e-commerce, healthcare, cybersecurity, marketing, media | Applications: image and speech recognition, predictions, personal assistants, self-driving vehicles |

Pros and Cons of Artificial Intelligence

Pros-

- Effective decision-making

- Less prone to errors

- Vast day-to-day applications

- Saves time

- Doesn’t wear out easily and can work 24/7.

Cons-

- High cost

- Increases unemployment

- Creative thinking and emotional intelligence are absent

- Doesn’t improve with experience unless learning is built in

- Increases dependency on machines/computers

Pros and Cons of Machine Learning

Pros-

- Easily identifies patterns

- Evolves with time

- Highly adaptable to different environments without human intervention

- Vast application

- Handles multiple varieties of data with ease

Cons-

- Error susceptibility is very high

- Time and resource-consuming

- Poor-quality or insufficient data leads to poor accuracy

Both technologies have their share of benefits and drawbacks, and both are the future of the computer science world. It would not be a surprise to witness an era in which machines dominate the world more than humans do.
